Splio Customer Data Platform: Main concepts

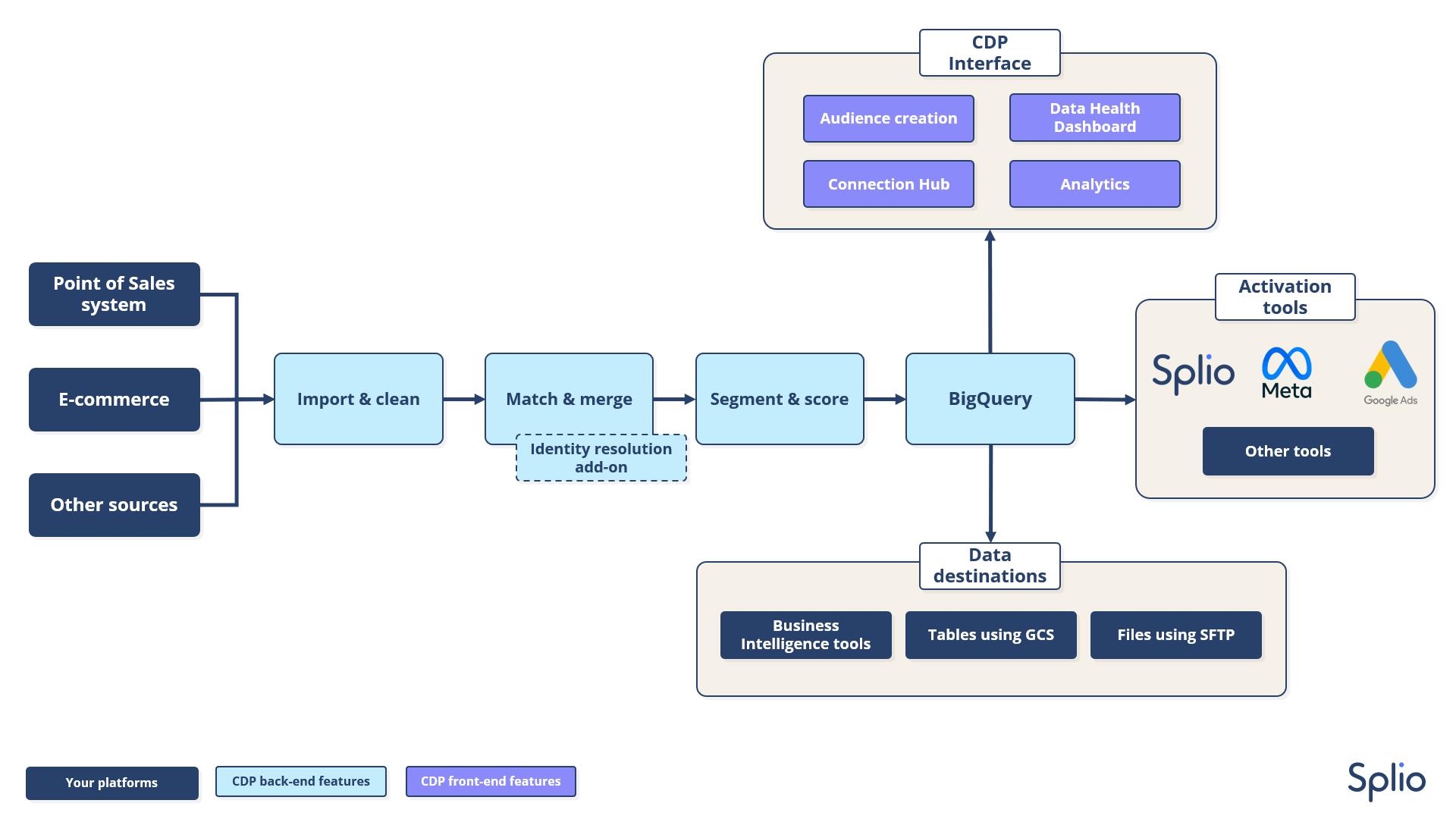

The Splio CDP combines a CDP with advanced data normalization features and a predictive engine based on deep learning algorithms. Your CDP is your single source of truth for data: all your data sources are gathered and cleaned there, both to activate your customer database and to serve all your Business Intelligence needs.

For this reason, the CDP is not sold as a stand-alone offer. It is sold either in combination with the predictive features or, for clients who do not need predictive marketing, in a bundle with our Marketing Automation platform.

In this introduction, you will find a definition of the main concepts used in the rest of the technical documentation.

Splio Predictive engine

Deep learning algorithms use the data you upload to help you optimize your marketing strategy. They power three main types of features:

- Find the best users to send your campaigns to.

- Target and analyze your database with Custom Audience Filter attributes, such as Propensity to buy and Customer Lifetime Value.

- Find insights about your users and offers in data visualizations such as Audience Mapper and Efficiency Map.

Splio CDP

There are as many CDP definitions as CDP vendors. Here is ours:

- Our CDP is a database that receives data from multiple sources and handles data normalization and cleaning.

- The CDP then performs identity resolution and deduplication on users and, as an add-on if needed, on other objects. Business rules assign a master ID to each of them. The master IDs of users are then propagated to related objects such as purchases and carts.

- The customer knowledge obtained from this database is enriched with pre-computed attributes.

The main goal of these features is to obtain a database as clean, true, and dense as possible.
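To make the identity resolution step more concrete, here is a minimal sketch in Python. It is not Splio's implementation: the business rule (two users are the same person when their normalized emails match), the field names (`id`, `email`, `user_id`, `master_id`), and the choice of the first record seen as the master are all assumptions made for illustration.

```python
def normalize_email(email):
    """Hypothetical normalization rule: trim whitespace and lowercase."""
    return email.strip().lower()


def assign_master_ids(users):
    """Return a mapping from each user id to its master id.

    Assumed business rule: users sharing the same normalized email are
    duplicates, and the first one encountered becomes the master.
    """
    master_by_key = {}
    master_of = {}
    for user in users:
        key = normalize_email(user["email"])
        master_by_key.setdefault(key, user["id"])
        master_of[user["id"]] = master_by_key[key]
    return master_of


def propagate_master_ids(purchases, master_of):
    """Attach the master id of the buying user to each purchase."""
    return [{**p, "master_id": master_of[p["user_id"]]} for p in purchases]


# Illustrative data only.
users = [
    {"id": "u1", "email": "Ana@Example.com "},
    {"id": "u2", "email": "ana@example.com"},
    {"id": "u3", "email": "bob@example.com"},
]
purchases = [
    {"order": "o1", "user_id": "u2"},
    {"order": "o2", "user_id": "u3"},
]

master_of = assign_master_ids(users)
linked_purchases = propagate_master_ids(purchases, master_of)
```

In this sketch, `u1` and `u2` resolve to the same master ID, so the purchase made under `u2` ends up attached to the same customer as any purchase made under `u1`. Real business rules typically combine several identifiers and are configured per project.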

Splio CDP Data Input & Output

Splio CDP is meant to receive data from several sources and send transformed data to several destinations:

- Sources: The CDP receives data from your e-commerce platform, your point-of-sale system, and other sources to build a clean and unified database for all your marketing use cases. The methods to import data into the CDP are described on this page.

ℹ️ By default, data ingestion in Splio CDP runs once a day. If needed, the frequency can be increased to once every three hours.

- Destinations: The data is sent through a set of connectors to all the tools that can make use of it, and your tools can even read it directly through Google BigQuery:

  - Business Intelligence tools, to analyze your clients and prospects with clean data

  - Activation and advertising tools, to enable advanced and precise targeting: Marketing Automation, Mobile Wallets, Meta, Google Ads, and more

Data requirements

The CDP data model defines the best practices and guidelines for the data you import into the CDP. This page describes the file formats, headers, and names for each entity we can ingest, as well as the fields that compose them.

Data architecture

On this page, we describe the successive data transformation steps that take place in the CDP to produce a clean and enriched database.

Domain and Business unit management

On this page, we explain how enterprise-sized companies can set up a siloed Splio CDP environment matching their needs.
